cuda - Can CPU-process write to memory(UVA) in GPU-RAM allocated by other CPU-process? - Stack Overflow

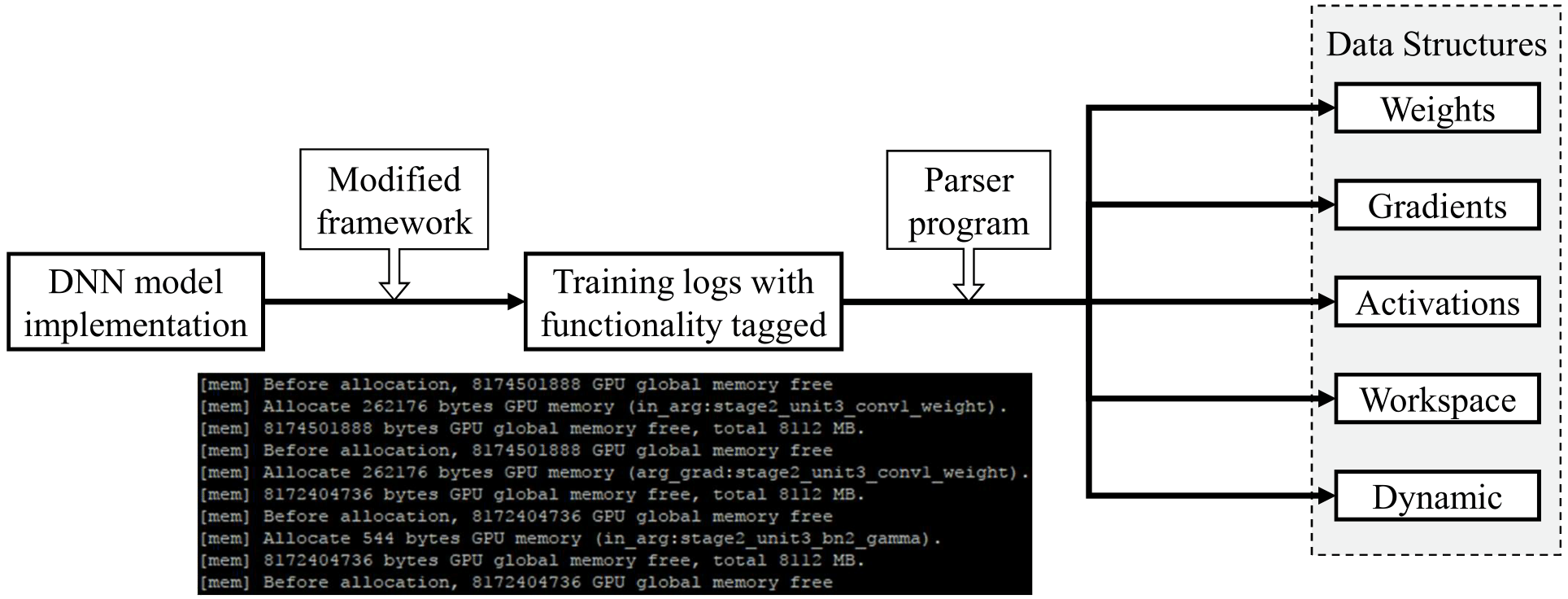

Applied Sciences | Free Full-Text | Efficient Use of GPU Memory for Large-Scale Deep Learning Model Training

When installed using the NVIDIA GPU option, loading a model doesnt increase GPU memory allocation · Issue #1198 · oobabooga/text-generation-webui · GitHub

![\[PDF\] Mosaic: A GPU Memory Manager with Application-Transparent Support for Multiple Page Sizes | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/1491af70279814c9aae11f80f44f93349b8bc351/2-Figure1-1.png)

![What Is Shared GPU Memory? [Everything You Need to Know]](https://www.cgdirector.com/wp-content/uploads/media/2022/06/GPU-Memory-Hierarchy.jpg)