Monitor and Improve GPU Usage for Training Deep Learning Models | by Lukas Biewald | Towards Data Science

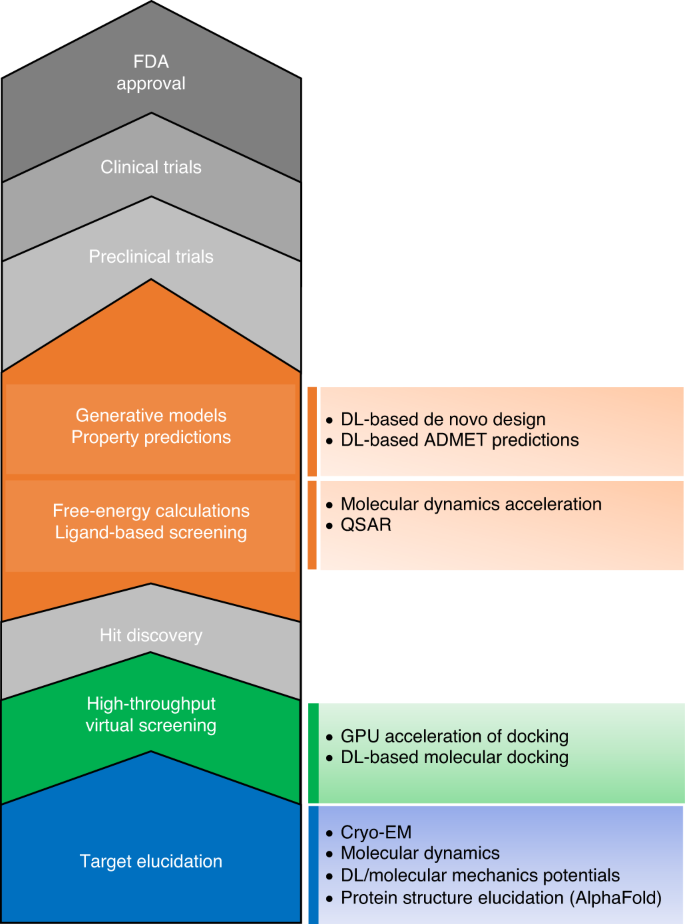

The transformational role of GPU computing and deep learning in drug discovery | Nature Machine Intelligence


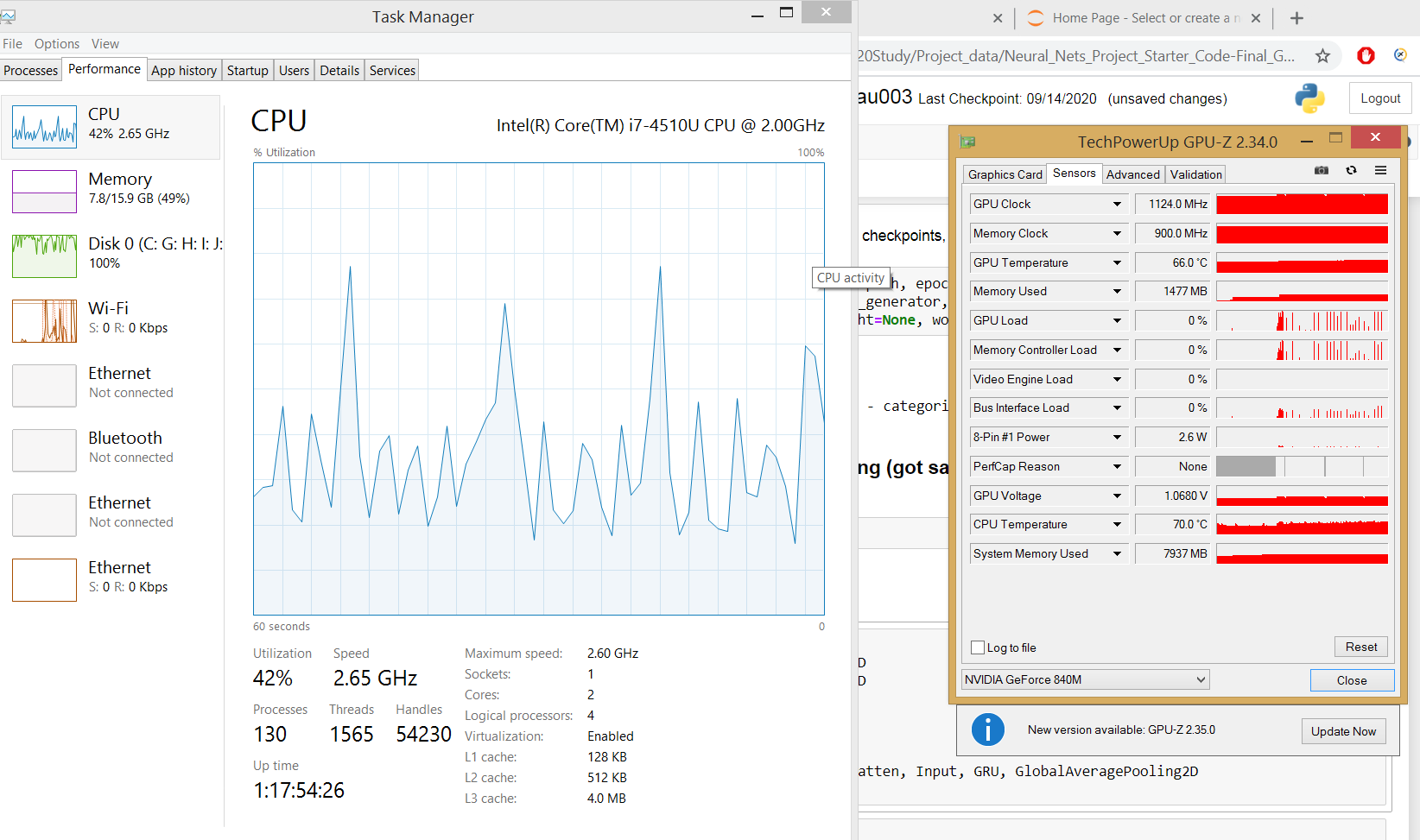

Tracking system resource (GPU, CPU, etc.) utilization during training with the Weights & Biases Dashboard

